There’s been a lot of chatter lately about UWP and its future, with some people even going as far as calling it dead after last week’s Microsoft Build 2019 in Seattle. I spent much of the conference talking to stakeholders about the future plans, trying to wrap my head around where things are headed, and giving my feedback about the good and the bad. There’s definitely a lot of confusion here, and I think the Windows team is really stumbling in trying to build a good developer story, and really has been since Windows 8.0. Why WPF and UWP are under the Windows division and not under the Developer division (which rocks its developer stories) is beyond me.

What is UWP really?

Anyway, I think one thing is starting to become clearer. We need to stop talking about UWP as one framework that runs as one type of app. What we need to do is start talking about the bits that make up UWP. In one way you could say UWP is dead as we know it. UWP has had some limitations holding it back, but there are also many great things about it. To make matters worse, what UWP is has been blurred by confusing messaging: things that weren’t UWP before are suddenly, maybe, sort of, sometimes also called UWP, and what is really happening to UWP hasn’t been properly communicated. I think that’s why people like Paul Thurrott saw an opportunity to write a click-bait article announcing the death of UWP right after lots of exciting things were announced about UWP’s future.

So what is UWP? Is it an app in the store? Is it an app only using the WinRT APIs? Or one that relies on some of the WinRT APIs (as well as others)? Is it a sandboxed app? Is it an app that has an app identity with all the features that brings? Is it an .appx/.msix file with clean install/uninstall? Is it an app that uses the newer XAML UI Framework? Is it an app that can run on all sorts of Win10 devices (Xbox, HoloLens, Phone, IoT Core, Surface Hub etc.)?

Historically it’s probably been an app that fits almost all of these. But lately not so much. What we’ve been seeing lately is that UWP is being broken up into many parts:

- You want the packaging story? You can MSIX it all, and put your Win32 or “UWP” app in the store. Don’t want the store? You still get the benefits of packaging, easy updates and deployment outside the store. This is probably why .NET Framework apps packaged up as MSIX/AppX were also called UWP apps: you got some of the UWP features like package ID, live tiles, push notifications etc., yet again muddying what UWP is.

- Do you like a lot of the new WinRT APIs like Bluetooth, push notifications, etc.? Well, with the SDK Contracts you can now easily use those in your .NET Framework apps (or any other Win32 app). Just reference the contracts SDK from NuGet and you’re good to go.

- You like UWP’s UI Model? Well, WinUI is coming to the rescue, allowing you to use the UI Framework either on top of the Windows Runtime / .NET Core or Win32 / .NET Framework (think XAML islands, perhaps without the islands in the future). UWP’s UI on Win32 running outside the sandbox could be a serious contender for replacing WPF. You get all the benefits of a new UI framework that can move fast without being limited by .NET Framework or Windows upgrades, but that will run down-level too. Remember: WPF first shipped in 2006, so it’s well over a decade old by now.

- Do you want to use .NET Core but still be running in the sandbox with all the previous UWP features? Well, that’s coming too with the .NET 5 announcement. One .NET to rule them all.
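
For the SDK Contracts bullet above, here is a minimal sketch of what the NuGet reference could look like in a project file. It assumes a project that uses PackageReference-style NuGet references, and the version number is only an example (pick the one matching the Windows 10 SDK you target):

```xml
<!-- Fragment of a desktop (.NET Framework or .NET Core 3.0) .csproj file. -->
<ItemGroup>
  <!-- Version shown is an assumption for illustration; use the one matching your target Windows SDK. -->
  <PackageReference Include="Microsoft.Windows.SDK.Contracts" Version="10.0.18362.2005" />
</ItemGroup>
```

With that in place, the `Windows.*` WinRT namespaces should become available to the project, though some APIs still expect the app to have a package identity.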

So talking about UWP like we used to just doesn’t make sense any longer. I don’t want to talk about UWP XAML. I want to talk about WinUI XAML on top of whichever framework I want (.NET Native, .NET Core, .NET Framework). Or MSIX when we are talking deployment (btw watch this session). Or WinRT/Windows SDK when we are talking about calling the Windows Runtime APIs. So in a way UWP is dead. Long live the bits and pieces of UWP.

.NET Framework and .NET Native are dead.

There I said it.

To be clear: when Microsoft says something is dead, it means completely and utterly out of support. When the community says something is dead, it means it’s in maintenance mode only, just getting bits and pieces of security and hotfix patches. You won’t see anything new and cool there, and you’re sitting on a ticking time-bomb. By all means keep your project on it if your project is itself in maintenance mode. Otherwise move. Microsoft doesn’t want to freak out customers by saying something is dead, and I get it. It’s a big scary word.

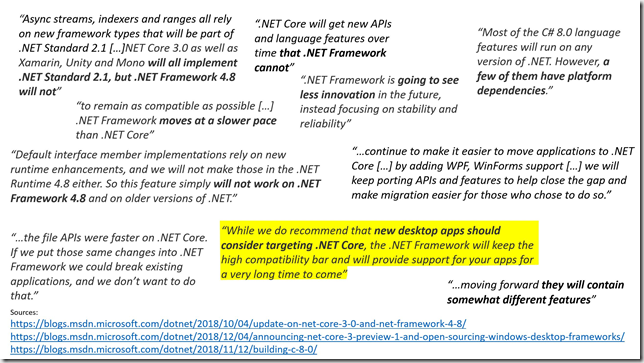

Here’s a slide from one of my .NET Core talks. They are all quotes from Microsoft blogs. You don’t have to read much between the lines to see where things are headed.

With the announcement of .NET 5, .NET Framework and .NET Native are at a dead stop. What would have been .NET Core 4.0 will be named .NET 5 (to avoid the confusion – as I said earlier, the dev division is really good at developer stories!), and in addition it will bring in various features from Mono, like AoT compilation, similar to what .NET Native in UWP offered. So in a way Mono is dead too, but the features it has that .NET Core doesn’t will be brought over, so it’s more of a merge between the two. Finally we only have more or less one .NET Runtime to worry about. Woohoo.

WinUI 3.0 – the future or death of the UWP UI Stack?

This was one of the bigger stories around the UWP stack (apart from getting .NET Core support). See the session here for the details. Historically, using new UWP UI features has been a really big problem. Let’s say you want to use XAML Islands in UWP. It just went final in 1903. Unfortunately that means you can’t really use it at all, because most of your customers haven’t upgraded yet. You’ll likely have to wait two years before you can use it. WinUI has been solving this lately for a lot of controls. Want to use the latest features of the TreeView control? Well, either set your min-version to 1809, or just pull in the WinUI 2.1 NuGet package and you can now use the TreeView control down to 1607. However, note that you need to change the TreeView control’s namespace from Windows.* to Microsoft.* (so it doesn’t clash with the built-in WinRT TreeView). No big deal – that’s quickly dealt with. And it’s awesome. But not all the controls are there, like the brand new XAML Islands stuff. For that you need 1903. No way around it.
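
To make the namespace switch concrete, here is a rough XAML sketch. It assumes the Microsoft.UI.Xaml (WinUI 2.x) NuGet package is referenced; `MyApp.MainPage` and the `muxc` prefix are just illustrative names:

```xml
<!-- MyApp.MainPage is a hypothetical page; assumes the Microsoft.UI.Xaml NuGet package is referenced. -->
<Page
    x:Class="MyApp.MainPage"
    xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
    xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
    xmlns:muxc="using:Microsoft.UI.Xaml.Controls">
    <StackPanel>
        <!-- Built-in control: resolves to Windows.UI.Xaml.Controls.TreeView -->
        <TreeView />
        <!-- WinUI 2.x control: resolves to Microsoft.UI.Xaml.Controls.TreeView -->
        <muxc:TreeView />
    </StackPanel>
</Page>
```

Both controls can sit side by side in the same panel here. WinUI 2.x also expects its `XamlControlsResources` dictionary to be merged into the application resources in App.xaml.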

To solve some of those problems, Microsoft would have to lift the entire UI stack out of Windows, preferably all the way up to the base UIElement class. That means the entire UI Framework would be decoupled from the Windows OS, and you’d be able to use all the features on almost any version of Windows 10. This is what they announced they’ll do over the next year, with a preview at the end of this year (and they’ll even open-source it!), to be released as WinUI 3.0. I got really excited about this, as it meant I could always use the latest and greatest features of .NET Standard 2.x+, .NET Core and the UI stack, without having to drop support for older versions of Windows. Super exciting, and a great move.

Well… until you start digging into the details. The story quickly fell apart when I realized what this really means. Remember how I said that to use the WinUI TreeView control all you had to do was change the namespace? That works because the WinUI TreeView control still inherits from Windows’ UIElement class, so you can insert it just fine into the existing UI hierarchy.

But what the Windows team intends to do is also change the namespace that the UIElement base class resides in. Suddenly you can’t mix and match new and old UI controls. Once you opt in to WinUI 3.0, ALL your UI must change to use this new UI stack. That means this isn’t the old UWP UI Framework. It’s an entirely new, incompatible one.

Now you’re probably going “no big deal – I’ll just CTRL+H replace all the namespaces and I’m done”. Sure, I hope you’re that lucky, but what if you’re referencing a 3rd party component, and that component hasn’t been updated to use WinUI yet? Well, now you’re stuck. Components like Telerik, Windows Community Toolkit, ArcGIS Runtime, Xamarin.Forms etc. all need to ship a breaking-change update that supports the new UI model. And at the same time, they’ll likely have to keep supporting the old UI stack for the customers that are not ready to move yet. UWP has historically struggled, so I don’t find it unlikely that some component vendors will decide just to drop support for it (or wait and see if it catches on), rather than trying to justify the resources needed. And I’m not clear on how we component vendors will even do this, as the TFM will likely be the same (or multiple) and we’ll get clashes between the old and new UI stacks. I’m scared UWP won’t survive a complete reset of the UWP UI stack. On the other hand, it also seems like a necessary move.

Now, one of the reasons to bring the UI stack out of band and be able to pick any version is also to be able to innovate more quickly on what XAML is and can do, while getting quick adoption of new features from the down-level support it brings. Wait, did you say innovate XAML? A language that hasn’t really evolved since it was conceived, and in the process hopefully making me more productive? Oh yes please! Sounds great. Until you’re then told that bringing it out of band gives Microsoft the freedom to make more breaking changes in order to innovate, without hosing existing users who stay on an older version. Well, back to the component vendors: you just made their life exponentially worse. They now have to rush out updates for each major version of WinUI and support multiple different versions of WinUI, because some users might not be ready to upgrade and deal with all the breaking changes. Forcing breaking changes on the entire ecosystem should be an absolute no-no. You could probably ssssmaybe pull it off once, but from then on you really need to stabilize for a very long time, or the entire ecosystem is going to drop out one by one. If you’ve ever had to maintain multiple versions of the same product, you know how painful, frustrating and resource-intensive this can be.

One thing that really bugged me was that the huge namespace breaking change was not clearly communicated at Build, especially the repercussions it creates. It was literally glossed over as just a quick little rename here and there, and it took talking to a lot of people before this really clicked for me. I later learned there was a roundtable discussion on this for select invited people, so it’s definitely not a surprise for the Windows team. IMHO this really needed to be a more open discussion from the get-go, with the pros and cons laid out openly, to get the community to buy in to this.

I did get the chance during Build to discuss a lot of the different ways it could be done, and the pros and cons of each. Don’t bring UIElement out into WinUI, only everything below it: but that could hold back innovating on XAML. Break often and move quickly: but that could kill off the ecosystem. Keep compatibility at 100%: but that would hold back any future change. And so on. All of them had cons that sucked, and I’m honestly not entirely clear myself what the right answer is (hence why I think we need big open discussions about all the possibilities). The only thing I would put my foot down on hard: definitely no more than one breaking change (except for edge cases and security reasons, like how .NET Core has historically done it). It really scared me that frequently breaking everyone was even on the table for discussion.

I also talked a lot to the Xamarin.Forms team about this: They seem just as confused about what to do with this, and they would be forced to add breaking changes too, also hosing all their 3rd party class libraries that have UWP specific renderers. The ripple effects of these WinUI changes are not small at all.

Wrapping up

So where are we at today?

Well first here are some of my concerns:

Wrt. WPF and WinForms: It’s awesome that they’re going open-source on top of .NET Core, but to be honest it’s very unclear how much they are going to evolve past getting them on top of 3.0. Microsoft is clearly committing to bring them to .NET Core. But it’s not clear whether they want to innovate beyond that, apart from bug fixes and various tweaks. Will we get proper DirectX 11 and composition support? Will we get x:Bind support? Will XAML innovate in WPF? Will we even get any new APIs? Time will tell if this is just a port to .NET Core and then back to maintenance mode, or if it’ll be more than that. No one I talked to seemed willing to commit to anything.

Wrt. UWP: It is clear they want to bring the platform forward. The change they’re making to decouple much of UWP from Windows is sorely needed to save UWP. But it is not clear how they are going to do it, or how it is going to affect the entire ecosystem. In the process of saving UWP they might just risk killing it off, unless they get a really good migration story and get the entire component ecosystem on board quickly.

So what should you do with all this stuff going on? Well, here’s my recommendation: Whether you do WPF, WinForms or UWP, if it works for you now, continue the course you’re on. You can’t plan for the unknown anyway, and Microsoft generally likes to support things for 10 years. Whatever happens, I doubt you’ll be setting yourself up for failure. You might just get more options later, and definitely not fewer. My biggest concern right now is how the changes to WinUI are going to affect the future, especially among component vendors.

Have anything to add? Please continue the conversation in the comments.

- - -

Fun bottom note: What’s happening to UWP is not that it’s being killed: Microsoft is pivoting, bringing the best parts to where we need them. This actually happened before, but it was unfortunately not used as an opportunity to perform a pivot. Remember Silverlight? Guess where .NET Core’s cross-platform CLR came from. And lots of Silverlight’s code also ended up in UWP. If only they had pivoted and said “Yeah, we agree Silverlight doesn’t make sense in the consumer space, but it’s thriving in the enterprise space, so let’s build on that, and we’ll evolve it into .NET Core and Windows Store”. Unfortunately that didn’t go so smoothly, and lots of people still suffer from PTSD and are wary of whatever Microsoft does that appears to be killing off any technology.